The General Data Protection Regulation, GDPR for short, is just two months away. I have been working on chatbots for a while now, and in recent months we have seen quite a few customers struggling with the new regulation and its impact on chatbots. I’ll therefore give you my humble take on it and explain how we tend to deal with it. Use it as inspiration, but make sure you get advice on your own situation before applying any of the recommendations, as I’m certainly not a GDPR expert, nor do I have a background in law.

On May 25th, the GDPR becomes enforceable. It clarifies, but also restricts, the way companies and service providers can handle your personal data.

In order to understand the impact of this new regulation on chatbots we first need to understand what personal data is exactly. According to the regulation, personal data is any information that relates to an identified or identifiable living individual.

This is harder than it sounds, because different pieces of information that are collected together can also lead to the identification of a specific person, and therefore count as personal data too. Think of a first name and surname, a home address, an email address such as name.surname@company.com, location data or an IP address.

This makes GDPR extremely relevant for chatbots, since the great benefit of chatbots is that they allow for highly personalized one-to-one communication. The whole idea of a chatbot, especially one used as a virtual assistant, is to know all about its users and their context in order to have meaningful conversations.

So let’s look at some of the measures you should take:

1. Be transparent about Personal Data

Make sure you know exactly which Personal Data you collect in your bot and why you need it; communicate about this clearly in your privacy policy. Ideally you notify your users upfront about the policy and give them an opportunity to read it through.

Our bot defaults, which all Botsquad bots automatically inherit at their inception, include the following code:

@privacy entity(match: ["privacy", "gdpr", "personal data"], label: "privacy")

dialog trigger: @privacy do

invoke privacy

end

dialog privacy do

say "please read our privacy policy carefully at https://www.botsquad.com/privacy"

end

This way, bots are able to present their privacy statement. By invoking the privacy dialog explicitly, you can proactively inform users about the privacy statement. Another way is to let your audience ask for it explicitly. Our default bot behavior supports both.

Try it yourself by opening the studio and choosing one of the example bots, for instance the BaseBot. Then replace the main dialog with the following:

dialog main do

invoke hello

invoke privacy

invoke menu

end

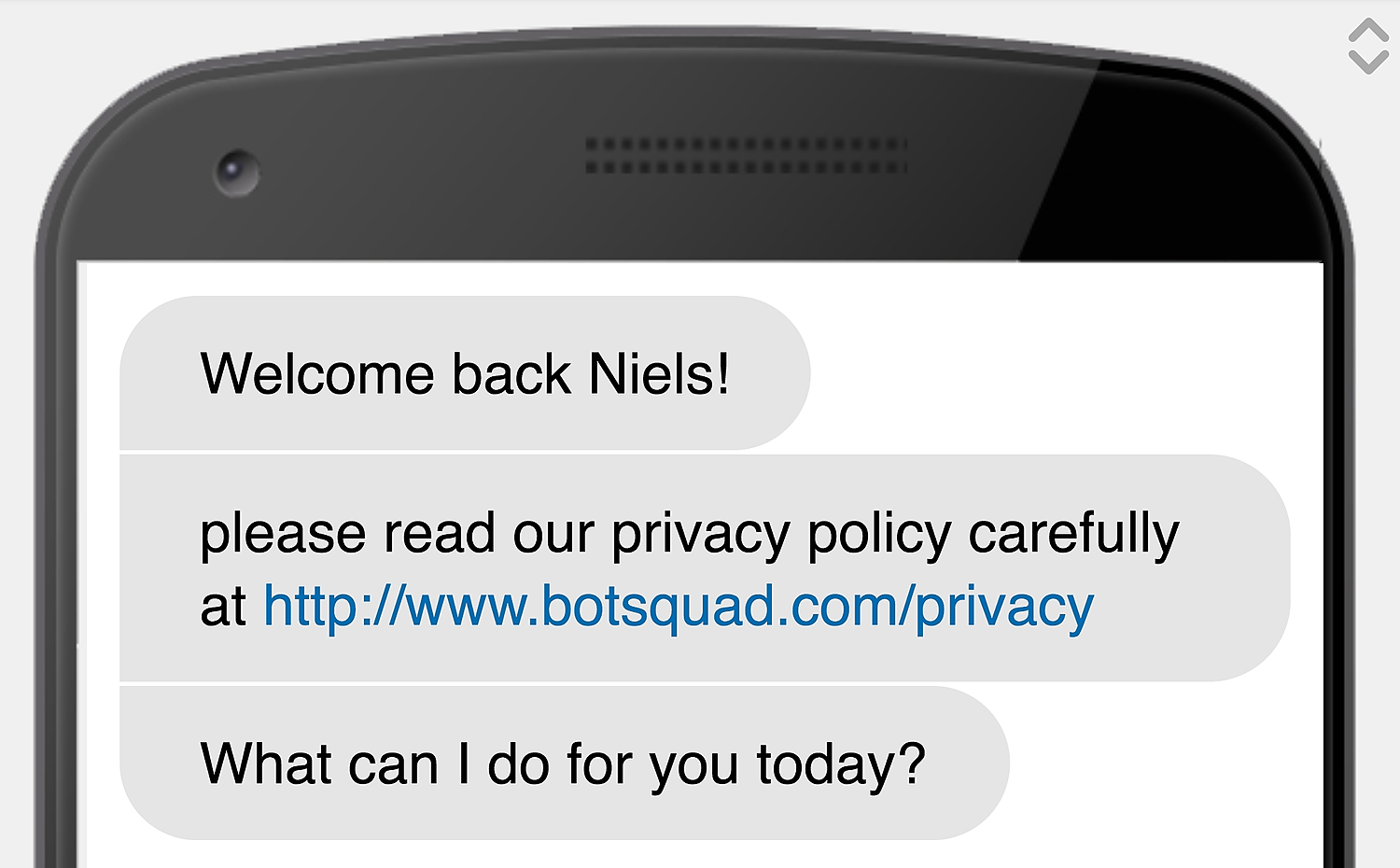

This script will behave like this:

Make sure you override the privacy dialog from defaults with your own dialog that points to your specific privacy statement. You can do this by creating the following dialog in your main script:

dialog privacy do

say "please read our privacy policy carefully at http://www.mydomain.com/privacy"

end

This will cause the privacy dialog in defaults to be overridden by your version.

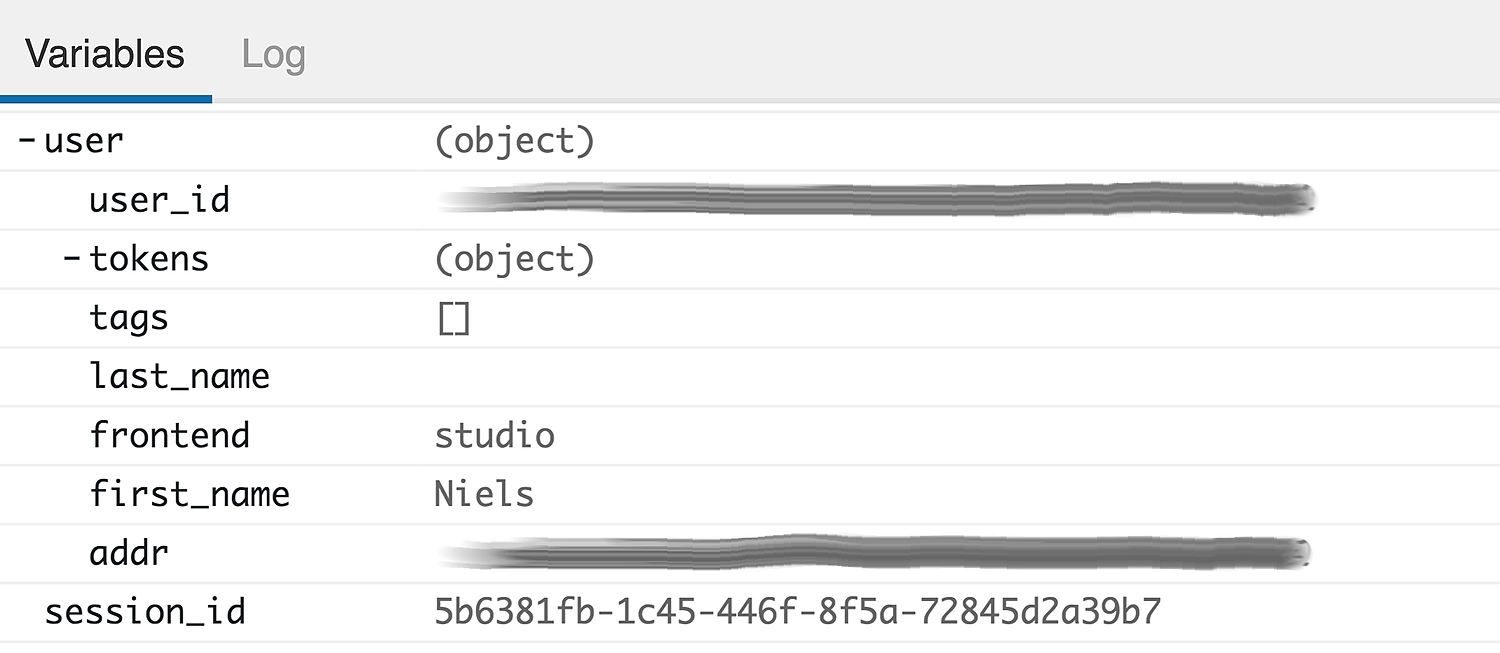

2. Store Personal Data separately and encrypted

We advise storing all personal data in a dedicated user object that is stored encrypted and separated from other data. In Botsquad, the user object is used for this purpose. If you run your chatbot in the studio you can watch all collected attributes in the variables pane below:

By default, all data stored as attributes of the user object will be forgotten at the end of the session, unless it is explicitly remembered using the remember function, as shown below.

user.first_name = ask "what is your name?"

remember user.first_name

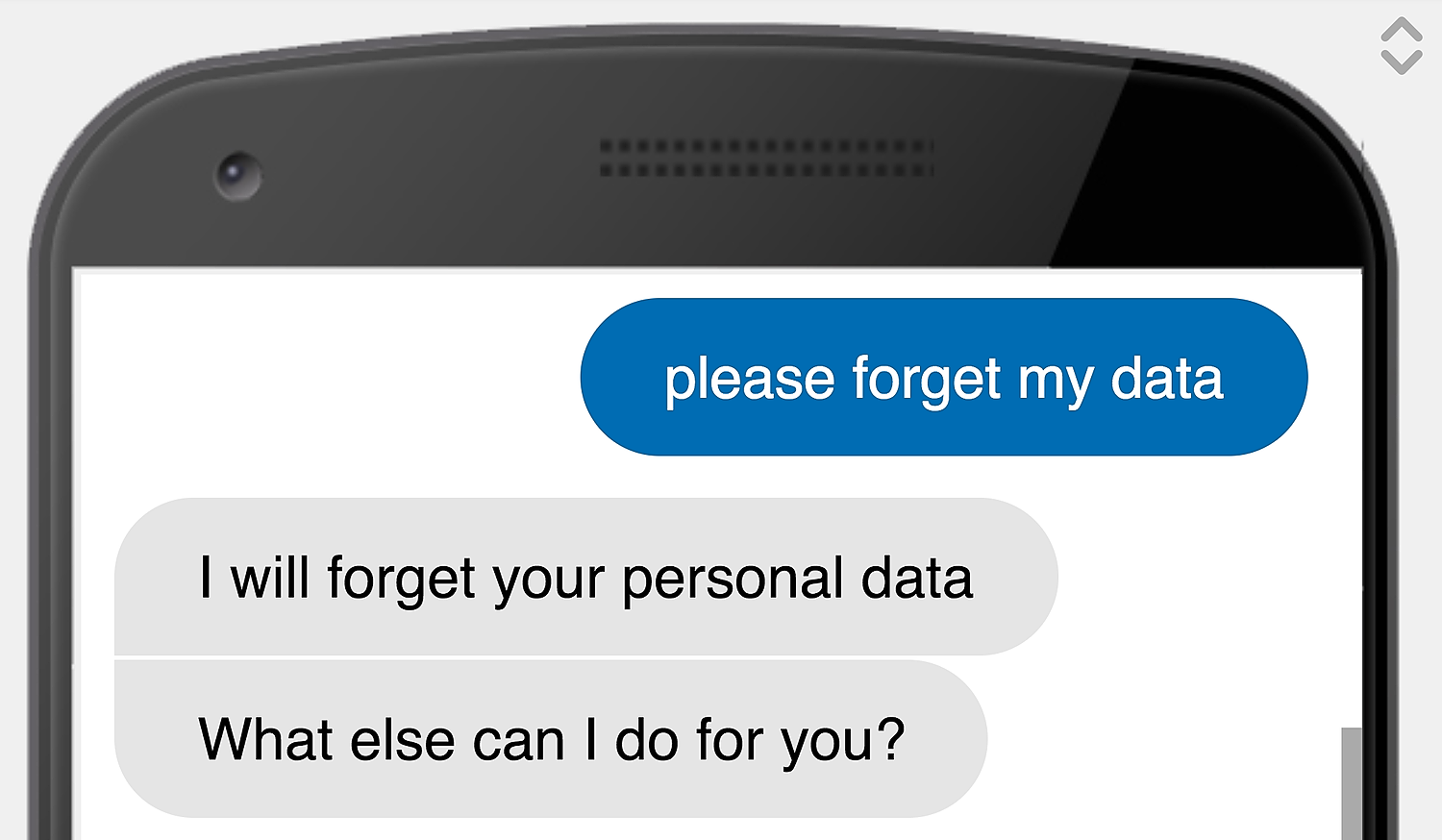
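If you are not on Botsquad, the same "forget by default, remember on request" idea can be sketched in a few lines of Python. This is only an illustration of the principle, not how Botsquad implements it: the `UserProfile` class and its method names are made up for this example, and encryption at rest is left out because it depends on your storage layer.

```python
class UserProfile:
    """Personal data for one user, kept apart from other bot state."""

    def __init__(self, persisted=None):
        # Attributes that were explicitly remembered in earlier sessions.
        self._persisted = dict(persisted or {})
        # Attributes collected during the current session only.
        self._session = {}

    def set(self, key, value):
        self._session[key] = value

    def get(self, key, default=None):
        if key in self._session:
            return self._session[key]
        return self._persisted.get(key, default)

    def remember(self, key):
        # Opt in to persistence, one attribute at a time.
        if key in self._session:
            self._persisted[key] = self._session[key]

    def end_session(self):
        # Everything that was not remembered is dropped here.
        self._session.clear()


profile = UserProfile()
profile.set("first_name", "Ada")
profile.set("email", "ada@example.com")
profile.remember("first_name")
profile.end_session()

print(profile.get("first_name"))  # Ada
print(profile.get("email"))       # None
```

The point of the design is that persistence is something you have to ask for per attribute, so you cannot keep personal data around by accident.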

3. Allow users to have their data forgotten

According to the GDPR, users should be able to have all their Personal Data forgotten. Botsquad supports this behaviour using the forget function.

forget user.first_name

You could write a little dialog for this that gets triggered by the user typing something like ‘please forget my data’, ‘delete my personal data’, etc.

@forget entity(match: "(delete|forget)(.*)(my|personal)(.*)data")

dialog trigger: @forget do

say "I will forget your personal data"

forget user.first_name

end
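For other platforms, here is a rough Python sketch of the same trigger. It reuses the regular expression from the entity above; the case-insensitive matching, the `handle_message` function and the plain dict that stands in for the user object are assumptions of this example. Unlike the short dialog above, it clears every stored attribute, since a request to be forgotten covers all personal data and not just the first name.

```python
import re

# Same pattern as the @forget entity; ignoring case is an assumption here.
FORGET_PATTERN = re.compile(
    r"(delete|forget)(.*)(my|personal)(.*)data", re.IGNORECASE
)


def handle_message(text, profile):
    """Clear all stored attributes when the user asks to be forgotten.

    `profile` is a plain dict standing in for the user object.
    Returns the bot's reply, or None if the message is not a forget request.
    """
    if FORGET_PATTERN.search(text):
        profile.clear()
        return "I will forget your personal data"
    return None


profile = {"first_name": "Ada", "email": "ada@example.com"}
print(handle_message("Please forget my data", profile))
print(profile)
```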

4. Allow users to retrieve their data

Another obligation that comes with GDPR is that users must be able to retrieve their Personal Data. This can be done in multiple ways. You could build a dialog that simply presents the data to the user:

@mydata entity(match: "(show|get|present)(.*)(my|personal)(.*)data")

dialog trigger: @mydata do

say "This is what I remembered about you"

say user.first_name

say user.email

end

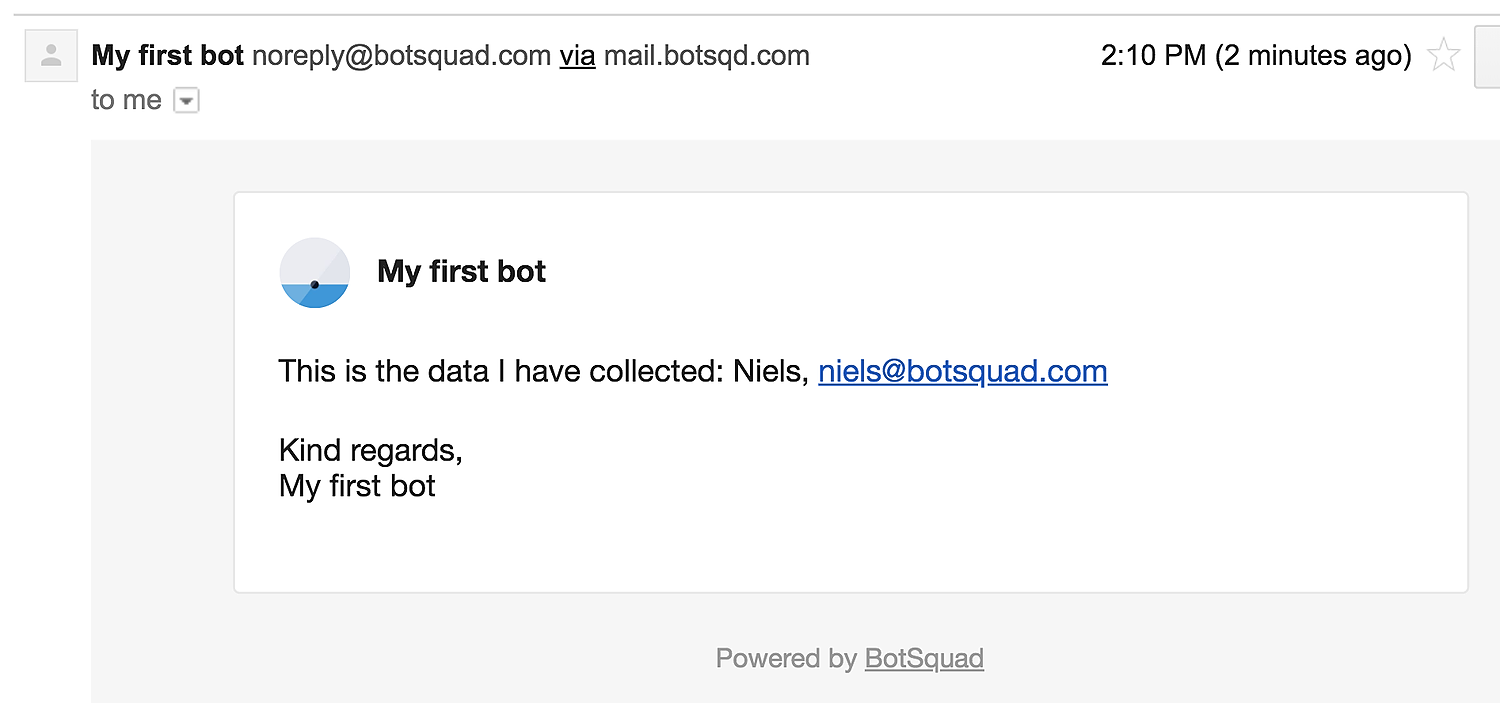
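The dialog above lists each attribute by hand, which is easy to forget to update when you start collecting something new. As a platform-neutral sketch, the overview can also be generated from whatever is stored. The `format_personal_data` function and the dict that stands in for the user object are hypothetical names for this example.

```python
def format_personal_data(profile):
    """Return a readable overview of every attribute stored for a user."""
    if not profile:
        return "I have not stored any personal data about you."
    lines = ["This is what I remembered about you:"]
    # Sort the keys so the overview is stable between requests.
    for key in sorted(profile):
        lines.append(f"- {key}: {profile[key]}")
    return "\n".join(lines)


print(format_personal_data({"first_name": "Ada", "email": "ada@example.com"}))
```

The same text could be used as the body of an email instead of a chat message.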

You could also choose to send the data by email, like this:

dialog trigger: @mydata do

mail user.email,

"your personal data",

"This is the data I have collected: " +

join([user.first_name, user.email], ", ")

say "I've sent you an e-mail with all personal data we have."

end

Which will send an email looking like this:

5. Privacy-first design

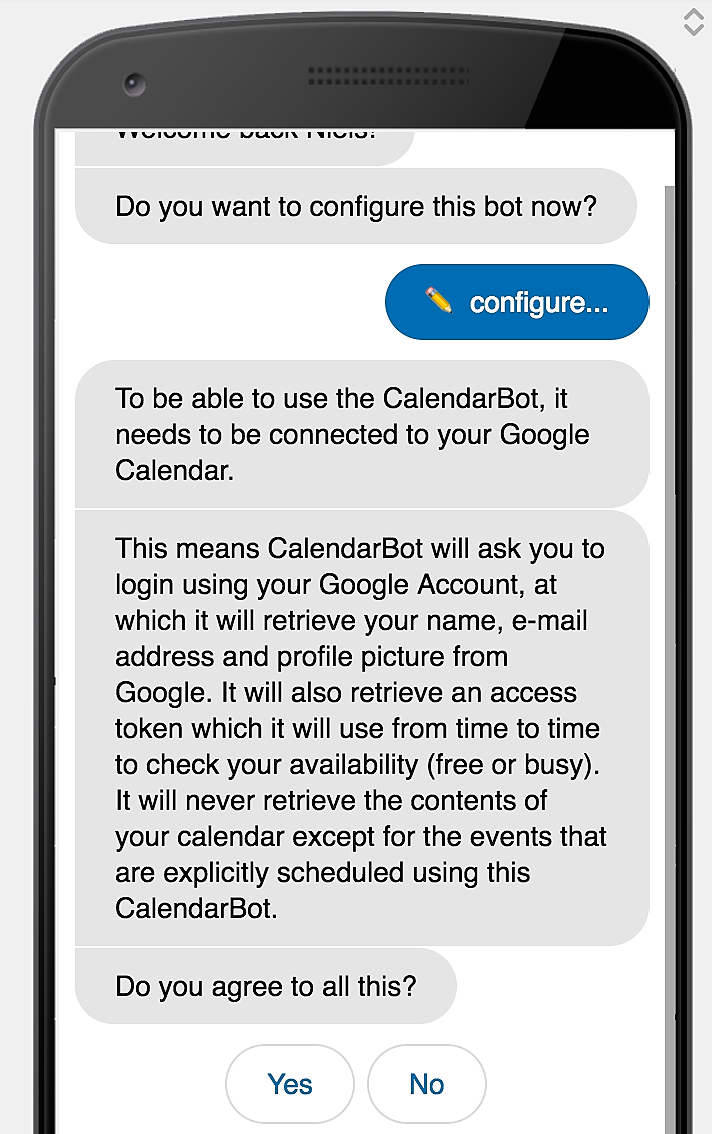

Adopt a privacy-first attitude and get accustomed to it. There is nothing wrong with asking your users for permission and explaining what you are doing while you are doing it. A chatbot is perfectly suited for this, because you are always in a dialog with your users. For instance, the following code is used in our CalendarBot:

say "To be able to use the CalendarBot, it needs to be connected to your Google Calendar."

say "This means CalendarBot will ask you to log in using your Google Account, at which point it will retrieve your name, e-mail address and profile picture from Google. It will also retrieve an access token, which it will use from time to time to check your availability (free or busy). It will never retrieve the contents of your calendar, except for the events that are explicitly scheduled using this CalendarBot."

ask "Do you agree to all this?", expecting: [:yes, :no]
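The important part is what happens after the question: nothing should be retrieved unless the answer is a clear yes. A minimal Python sketch of such a consent gate is shown below. The function names, the accepted answers and the `start_login` callback are assumptions made for this example, not part of the CalendarBot.

```python
def parse_consent(answer):
    """Map a free-text reply to True (agreed), False (declined) or None (unclear)."""
    normalized = answer.strip().lower()
    if normalized in {"yes", "y"}:
        return True
    if normalized in {"no", "n"}:
        return False
    return None


def connect_calendar(answer, start_login):
    """Only start the login flow after an explicit yes."""
    consent = parse_consent(answer)
    if consent is True:
        start_login()
        return "connected"
    if consent is False:
        return "declined"
    # Anything else is treated as "no consent yet", never as a yes.
    return "ask_again"


print(connect_calendar("Yes", lambda: print("starting Google login")))
print(connect_calendar("maybe later", lambda: print("starting Google login")))
```

Treating an unclear answer as "ask again" rather than as agreement keeps the default on the safe side.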

I hope this helps you see how GDPR can be implemented in a chatbot or conversational app. Obviously I used our own platform for the examples, but you should be able to apply the same strategy in your own platform or tooling too.

If you have any questions or ideas, feel free to contact me directly.